Steps Ahead

Natural language generation: No longer a fantasy

By Matthew Hodgson, CEO and founder of Mosaic Smart Data

Imagine if your highest performing, most experienced quant could write all the reports your bank generates. And now imagine those reports could be produced in seconds and distributed across the bank. The gains in efficiency, performance and business insight would have an almost immediate impact on your bottom line.

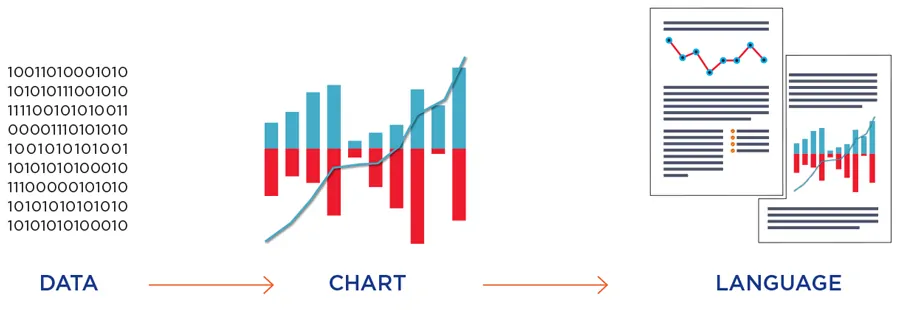

Advancements in natural language generation (NLG), a software process that automatically transforms data into a written narrative, are making lightning-fast generation of expert business intelligence a reality in today’s financial markets.

The potential for NLG to transform the way analyst reports are generated and distributed is no secret in the fintech industry. As more banks seek to equip themselves with the tools to instantly turn data into relevant, intuitive and timely information, the NLG market is expected to more than double in value from USD 322.1 million in 2018 to USD 825.3 million by 2023.[1]

The drivers behind this demand are clear. Banks face increasingly complex challenges every day and competition is fierce, with the effect that profits are harder to generate than ever before. Meanwhile, increasing regulation and transparency requirements are an ever-growing burden.

Banks already have the data they need to overcome these challenges, but converting it into intelligence that can support informed decision-making ties up their data experts or quants with routine and repetitive tasks. Given the exponential growth of available data, surfacing the most relevant insights is the most worthwhile goal. NLG can automatically turn this data into human-friendly prose.

NLG has existed in more basic forms for some time, but only recently has the technology become advanced enough for application in sectors such as capital markets. A simple, early example of NLG usage, albeit in consumer rather than wholesale financial markets, is a system that automatically generates form letters, such as a letter telling a consumer they have hit their credit card spending limit.

These simple systems use templates not unlike a Word document mail merge, but today’s increasingly sophisticated NLG systems dynamically create text using either explicit models of language or statistical models derived by analysing human-written texts.
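The template approach described above can be sketched in a few lines. This is a minimal illustration only, using Python's standard `string.Template`; the letter wording, the field names and the `render_limit_letter` helper are assumptions made for the example, not taken from any real system.

```python
from string import Template

# Hypothetical fixed template: the wording and field names are illustrative only.
LIMIT_LETTER = Template(
    "Dear $name,\n"
    "Your card ending $last4 has reached its spending limit of $currency $limit.\n"
)


def render_limit_letter(name, last4, limit, currency="USD"):
    """Fill the fixed template with one customer's details, mail-merge style."""
    return LIMIT_LETTER.substitute(
        name=name,
        last4=last4,
        limit=f"{limit:,.2f}",  # format the number with thousands separators
        currency=currency,
    )


letter = render_limit_letter("A. Customer", "1234", 5000)
print(letter)
```

Every letter produced this way has the same sentence structure; only the merged fields change. That is the limitation the more sophisticated, dynamically generated text is meant to overcome.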

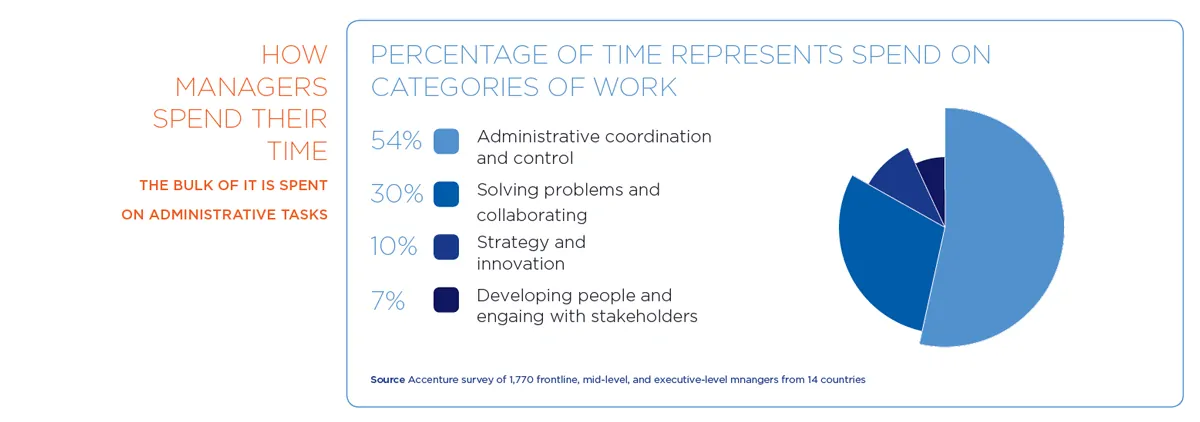

For banks, this holds the key to major improvements in efficiency for their business intelligence departments and beyond. As the amount of data available continues to grow, banks are increasingly overwhelmed with report writing, presentations, client reviews, sales performance and regional reviews – the list goes on and on.

Teams of analysts are required to manually produce these reports. Not only is this a significant staffing cost for banks, but many of these analysts are not being used to their full potential because much of their time is spent on menial but time-consuming tasks. Nevertheless, marrying data with narrative is an essential component of a bank’s business intelligence offering and is critical to driving informed decision-making across the bank.

NLG technology can automate the most tedious and costly data-driven reports and dramatically increase the productivity and efficiency of teams across the bank. When integrated into a comprehensive data analysis infrastructure, it can generate, at the click of a button, intuitive prose that reads as if it were written by the best quant in the house.

Imagine having the best quants sit down with each team member and generate custom reports for each of them: analysing any graphs or data they need to understand and generating text which can point to the most salient features and outliers to provide the narrative to accompany the data. Now imagine them taking less than a few seconds to write the full report. That is the power of NLG.

These reports can be prepared with enough variance in language and style to keep the copy fresh and engaging to the reader. In the future, they are likely to include predictions as well. As an intermediate step, however, a real-time analytics framework provides the benefits of narrative description which can be refreshed on the most current data.

Against this backdrop, the stage is clearly set for the broader trend of automation in financial services to begin delivering efficiencies to banks’ business intelligence units. But to realise NLG’s full potential, banks must first put the core foundations of effective data analysis in place – and this begins with aggregating data from across the organisation and ensuring it is translated into a structured, standardised dataset. Without these building blocks in place, NLG cannot turn raw, unstructured data into written text that is useful to its intended audience.

AGGREGATION AND STANDARDISATION: THE BUILDING BLOCKS OF NLG

The electronification of major financial markets such as fixed income and foreign exchange means there is more data available to banks, and the falling cost of computing power means that data has never been more cost-effective to store or analyse. That doesn’t mean, however, that banks are taking full advantage of its potential. Despite much talk about the power of technologies such as NLG, most trade data goes unused and its insights are left untapped.

Financial institutions already have a treasure trove of powerful transaction data in-house. It is time to make full use of it instead of paying exorbitant fees to exchanges.

Currently, many banks don’t have the underlying foundations to make the best use of the data. Data from different venues is captured in different messaging formats with different data fields, and it is often not shared across silos. It is simply not possible for automated NLG technology to produce meaningful reports unless data is standardised, and banks risk missing critical insights if they do not aggregate all data from across the entire organisation.
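To make the standardisation problem concrete, here is a minimal sketch of mapping records from two venues onto one schema. The venue names, field names, side codes and the `Trade` record are all hypothetical and chosen only for illustration; real messaging formats are considerably richer.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Trade:
    """One standardised trade record (hypothetical schema)."""
    venue: str
    instrument: str
    side: str  # "BUY" or "SELL"
    quantity: float
    price: float


# Hypothetical per-venue mappings: standard field -> that venue's field name.
FIELD_MAPS = {
    "venue_a": {"instrument": "sym", "side": "side", "quantity": "qty", "price": "px"},
    "venue_b": {"instrument": "Instrument", "side": "Direction",
                "quantity": "Size", "price": "Price"},
}

# Hypothetical side vocabularies collapsed into one convention.
SIDE_ALIASES = {"B": "BUY", "BUY": "BUY", "S": "SELL", "SELL": "SELL"}


def normalise(venue, raw):
    """Translate one raw venue record into the standard Trade schema."""
    fields = FIELD_MAPS[venue]
    return Trade(
        venue=venue,
        instrument=str(raw[fields["instrument"]]).upper(),
        side=SIDE_ALIASES[str(raw[fields["side"]]).upper()],
        quantity=float(raw[fields["quantity"]]),
        price=float(raw[fields["price"]]),
    )


raw_feed = [
    ("venue_a", {"sym": "eurusd", "side": "B", "qty": "1000000", "px": "1.1012"}),
    ("venue_b", {"Instrument": "EURUSD", "Direction": "Sell",
                 "Size": 2500000, "Price": 1.1015}),
]
trades = [normalise(venue, raw) for venue, raw in raw_feed]
for trade in trades:
    print(trade)
```

Once every record shares the same fields, types and vocabulary, downstream analytics and text generation can treat trades from any venue identically.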

The answer lies in platforms that provide a comprehensive, tailored analytics service, supported by solid data foundations. Aggregation and normalisation of data across both voice and electronic trades, carried out with the end goal of generating highly relevant insights to drive profitable decision-making, is a crucial first step in truly unlocking the power of advanced machine learning and data tools such as NLG.

Similarly, given their already stretched resources, banks do not want to have to spend months integrating their data sources and writing complex algorithms to enable their chosen NLG tool to work effectively. Partnering with specialist data technology firms that have the knowledge and experience to carry out the heavy lifting on their behalf can greatly reduce the burden on banks.

Mosaic Smart Data: the holistic solution

At Mosaic Smart Data we provide a comprehensive data analytics solution that enables banks to unite their entire fixed income, currencies and commodities (FICC) trading data into one powerful, real-time viewpoint. This enables each function – from business intelligence to back-office compliance, traders, sales desks and managers – to see, for the first time, exactly what is going on in the FICC business in real time and at any level of detail, from the macro to the atomic, depending on the user’s entitlements.

Built by a team with decades of experience in FICC and data analytics, our MSX® platform enables financial institutions to establish a holistic view of voice and electronic client flows by applying effective aggregation and standardisation to a multitude of trade data.

MSX® then applies its own highly sophisticated analytics to this normalised dataset, as well as providing a private algorithm container for the bank to house its own quantitative analytics. These analytics allow users to understand all aspects of the data, such as price, market share, and profit and loss, as well as trade execution and transaction cost analysis (TCA) on individual trades.

We have recently upgraded the platform with advanced NLG functionality, providing a natural extension to our existing offering. The technology enables MSX® users in business intelligence departments to take advantage of the fact that their data has already been fully aggregated from all sources across the bank and rigorously standardised. It enables them to generate automated reports that deliver insight and colour to readers, allowing them to make better-informed decisions about client interactions and ultimately improving FICC trade and sales performance.

These reports analyse a wide range of charts, such as sunbursts, bar charts, trade flow maps and price graphs, providing the narrative to quickly draw the most important features of the graph to the user’s attention.
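As a rough illustration of turning chart data into narrative, the sketch below picks out the highest, lowest and outlying bars of a bar chart and writes a short description. It is not how MSX® works internally. The `describe_series` function, the z-score threshold and the sample figures are assumptions made purely for the example.

```python
from statistics import mean, pstdev


def describe_series(label, values, z_threshold=2.0):
    """Describe a bar chart given as a dict of category -> value.

    Flags as outliers any categories whose value lies at least
    `z_threshold` population standard deviations from the mean.
    """
    numbers = list(values.values())
    avg = mean(numbers)
    spread = pstdev(numbers)
    top = max(values, key=values.get)
    bottom = min(values, key=values.get)
    outliers = [
        name for name, value in values.items()
        if spread > 0 and abs(value - avg) / spread >= z_threshold
    ]
    sentences = [
        f"{label} was highest for {top} ({values[top]:,.0f}) "
        f"and lowest for {bottom} ({values[bottom]:,.0f})."
    ]
    if outliers:
        sentences.append(
            f"{', '.join(outliers)} stood out against an average of {avg:,.0f}."
        )
    return " ".join(sentences)


# Hypothetical monthly volumes per client, in millions.
volumes = {
    "Client A": 120, "Client B": 95, "Client C": 110,
    "Client D": 105, "Client E": 100, "Client F": 400,
}
print(describe_series("Traded volume", volumes))
```

A production system would draw on far richer analytics, but the principle is the same: compute the salient features first, then hand them to the text generator.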

Reports can be generated which reflect each user’s view of the underlying data as configured in their MSX® dashboard, enabling thousands of unique narratives to be produced in the time it takes an analyst to write a single report. MSX® transforms the structured datasets already streaming through the platform into unique and variable descriptions, even with similar underlying data, ensuring the content remains engaging for the reader. Language can also be tailored specifically to fit the preferences of the particular end user.
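One simple way to vary the wording for the same underlying data is to choose among several phrasings of one fact. The sketch below assumes a small, hand-written list of phrasings and a seeded random generator; the `PHRASINGS` list, the `render` function and the sample facts are hypothetical, and a real NLG system would use much richer language models.

```python
import random

# Hypothetical alternative phrasings of the same fact.
PHRASINGS = [
    "{client} traded {volume:,} in {product}, {change:+.0%} versus last month.",
    "Volumes from {client} in {product} came to {volume:,}, "
    "a {change:+.0%} move on the prior month.",
    "In {product}, {client} accounted for {volume:,} of flow "
    "({change:+.0%} month on month).",
]


def render(facts, rng):
    """Pick one phrasing at random and fill it with the given facts."""
    return rng.choice(PHRASINGS).format(**facts)


facts = {"client": "Client A", "product": "EUR/USD",
         "volume": 1250000, "change": 0.12}
rng = random.Random(7)  # seeded so a given report can be regenerated identically
for _ in range(3):
    print(render(facts, rng))
```

The figures are identical in every variant; only the sentence around them changes, which is what keeps repeated reports from reading as boilerplate.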

Efficient integration is critical

Whereas previously, implementations of advanced technology such as NLG could take months or even years of work by a bank’s in-house software developers and analysts – and return on investment was uncertain – by working with specialist firms like Mosaic Smart Data, banks benefit from visible and predictable costs as well as rapid deployment.

The art of integration is a complex skill that technology vendors are able to fine-tune by working with a diverse spectrum of customers. This enables banks to quickly see results from their investment and increase the efficiency of their whole FICC team.

As automation and artificial intelligence permeate virtually every corner of capital markets, there is no doubt that a technology as effective and innovative as NLG is the future of business intelligence for banks.

For it to be deployed with maximum impact, however, a bank must first step back and take a holistic view of its data analytics capabilities. Only with the correct foundations in place, integrated efficiently and securely across the organisation, can the full benefits of NLG start to be realised. But, once they are, imagine the possibilities they will unlock.

1 www.marketsandmarkets.com/PressReleases/natural-language-generation.asp